窗口

在Flink中窗口的作用实际上是将无限的数据流基于固定时间或者固定数量切分为各个有界的数据集合,然后在对这些数据进行聚合运算,从而获得一定范围时间内的数据统计结果。

在Flink的DataStream中的API已经包含了大多数的窗口算子,其中包含了

stream

.keyBy(...) //key类型数据集

.window(...) //需要指定窗口分配器类型

[.trigger(...)] //需要指定触发器类型 (或者默认)

[.evictor(...)] //需要指定驱逐器类型 (或者没有)

[.allowedLateness(...)] //需要指定是否延迟处理数据 (或者为0s)

[.sideOutputLateData(...)] //可选

.reduce/aggregate/fold/apply() //(指定窗口计算函数)

[.getSideOutput(...)] //指定tag输出数据Windows Assigner:指定窗口的类型,定义如何将数据流分配到一个或多个窗口;

Windows Trigger:指定窗口触发的时机,定义窗口满足什么样的条件触发计算;

Evictor:用于数据剔除;

Lateness:标记是否处理迟到数据,当迟到数据到达窗口中是否触发计算;

Output Tag:标记输出标签,然后在通过getSideOutput将窗口中的数据根据标签输出;

Windows Funciton:定义窗口上数据处理的逻辑,例如对数据进行sum操作

以上基本上是Flink涉及到窗口时编程的套路,这个后续会详细介绍。下面简单介绍一下Flink的窗口类型。

Windows Assigner

在Flink中支持两种类型窗口:

- 基于时间戳范围内的窗口(左闭右开)

- 基于固定数量的窗口。

基于时间范围内的

package com.hph.window;

import org.apache.flink.api.common.ExecutionConfig;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.common.typeutils.TypeSerializer;

import org.apache.flink.api.java.functions.KeySelector;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStreamSource;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.source.RichSourceFunction;

import org.apache.flink.streaming.api.watermark.Watermark;

import org.apache.flink.streaming.api.windowing.assigners.WindowAssigner;

import org.apache.flink.streaming.api.windowing.time.Time;

import org.apache.flink.streaming.api.windowing.triggers.Trigger;

import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

import org.apache.flink.streaming.api.windowing.windows.Window;

import org.apache.kafka.clients.consumer.KafkaConsumer;

import java.text.SimpleDateFormat;

import java.util.Collection;

import java.util.Collections;

import java.util.Date;

import java.util.Random;

public class MyTimeWindow {

private static transient KafkaConsumer<String, String> consumer;

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment environment = StreamExecutionEnvironment.getExecutionEnvironment();

environment.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

DataStreamSource<String> dataStreamSource = environment.addSource(new RichSourceFunction<String>() {

@Override

public void open(Configuration parameters) throws Exception {

super.open(parameters);

}

boolean isRunning = true;

@Override

public void run(SourceContext<String> ctx) throws Exception {

//随机产生数据为 A-Z附带时间戳

while (isRunning) {

SimpleDateFormat simpleDateFormat = new SimpleDateFormat("yyyy/MM/dd HH:mm:ss");

Thread.sleep(1000);

char i = (char) (65 + new Random().nextInt(25));

long timeMills = (System.currentTimeMillis());

//格式化数据为A_Z的字符 + 时间

String element = i + "_" + simpleDateFormat.format(new Date(timeMills));

//设置时间戳

ctx.collectWithTimestamp(element, timeMills);

//水印时间=时间戳+0S

ctx.emitWatermark(new Watermark(timeMills + 0));

}

}

@Override

public void cancel() {

isRunning = false;

}

});

dataStreamSource.print();

//将数据转化为 Tuple2 之后对数据进行分组,同是创建一个窗口 每5秒滑动一次

dataStreamSource.map(new MapFunction<String, Tuple2<String, Integer>>() {

@Override

public Tuple2<String, Integer> map(String value) throws Exception {

String word = value.split("_")[0];

return Tuple2.of(word, 1);

}

}).keyBy(new KeySelector<Tuple2<String, Integer>, String>() {

@Override

public String getKey(Tuple2<String, Integer> value) throws Exception {

return value.f0;

}

}).timeWindow(Time.seconds(5)).sum(1).print();

environment.execute("MyWaterMarkAndTimestamps");

}

}

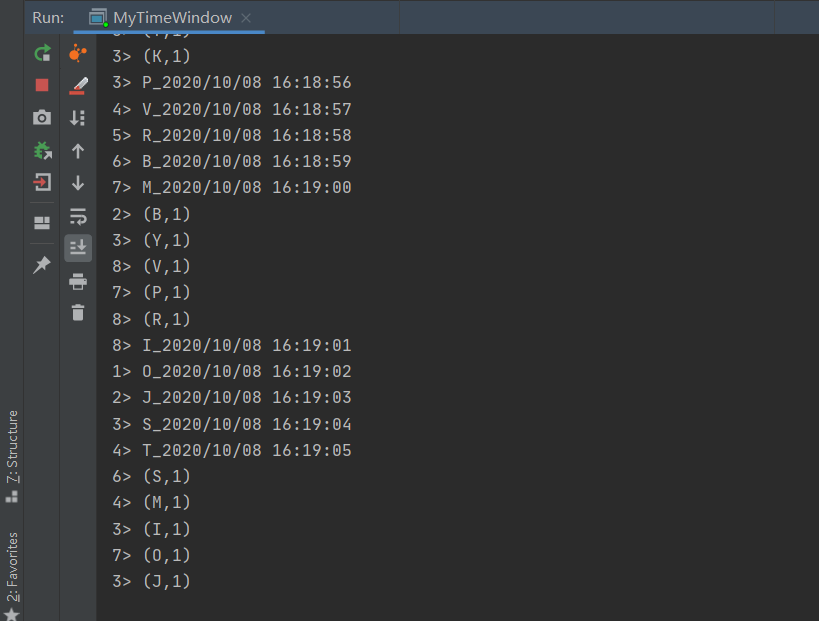

我们可以在这里面看到M在第下一计算中,基于时间范围内的为左闭右开

基于数量的窗口

package com.hph.window;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.java.functions.KeySelector;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStreamSource;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.source.RichSourceFunction;

import org.apache.flink.streaming.api.watermark.Watermark;

import org.apache.flink.streaming.api.windowing.time.Time;

import org.apache.kafka.clients.consumer.KafkaConsumer;

import java.text.SimpleDateFormat;

import java.util.Date;

import java.util.Random;

public class MyCountWindow {

private static transient KafkaConsumer<String, String> consumer;

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment environment = StreamExecutionEnvironment.getExecutionEnvironment();

environment.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

DataStreamSource<String> dataStreamSource = environment.addSource(new RichSourceFunction<String>() {

@Override

public void open(Configuration parameters) throws Exception {

super.open(parameters);

}

boolean isRunning = true;

@Override

public void run(SourceContext<String> ctx) throws Exception {

//随机产生数据为 A-Z附带时间戳

while (isRunning) {

SimpleDateFormat simpleDateFormat = new SimpleDateFormat("yyyy/MM/dd HH:mm:ss");

Thread.sleep(1000);

char i = (char) (65 + new Random().nextInt(25));

long timeMills = (System.currentTimeMillis());

//格式化数据为A_Z的字符 + 时间

String element = i + "_" + simpleDateFormat.format(new Date(timeMills));

//设置时间戳

ctx.collectWithTimestamp(element, timeMills);

//水印时间=时间戳+0S

ctx.emitWatermark(new Watermark(timeMills + 0));

}

}

@Override

public void cancel() {

isRunning = false;

}

});

dataStreamSource.print();

//将数据转化为 Tuple2 之后对数据进行分组,同是创建一个窗口 每5秒滑动一次

dataStreamSource.map(new MapFunction<String, Tuple2<String, Integer>>() {

@Override

public Tuple2<String, Integer> map(String value) throws Exception {

String word = value.split("_")[0];

return Tuple2.of(word, 1);

}

}).keyBy(new KeySelector<Tuple2<String, Integer>, String>() {

@Override

public String getKey(Tuple2<String, Integer> value) throws Exception {

return value.f0;

}

}).countWindow(2).sum(1).print();

environment.execute("MyWaterMarkAndTimestamps");

}

}

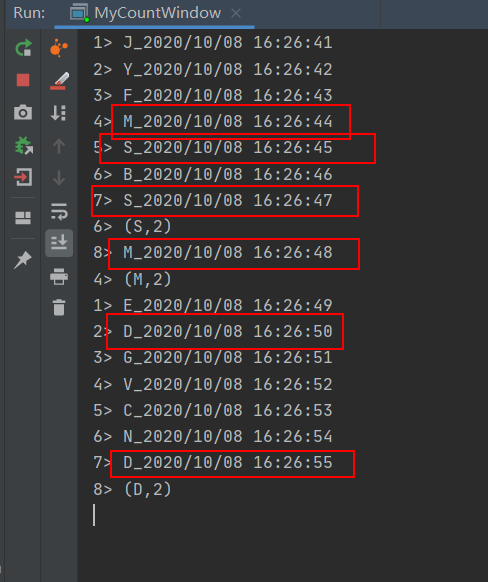

这里之前将数据按照key分组了,因此当满足key出现2次后即触发计算。

滚动窗口

滚动窗口是按照固定时间或者大小进行切分,窗口和窗口之间的元素互不重叠,该类型窗口比较简单,可能会导致前后有关系的数据计算结果不准确,对于按照固定大小或者周期统计某一指标的计算比较合适。

package com.hph.window;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.java.functions.KeySelector;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStreamSource;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.source.RichSourceFunction;

import org.apache.flink.streaming.api.watermark.Watermark;

import org.apache.flink.streaming.api.windowing.assigners.TumblingEventTimeWindows;

import org.apache.flink.streaming.api.windowing.time.Time;

import java.text.SimpleDateFormat;

import java.util.Date;

import java.util.Random;

public class MyTumblingWindows {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment environment = StreamExecutionEnvironment.getExecutionEnvironment();

environment.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

DataStreamSource<String> dataStreamSource = environment.addSource(new RichSourceFunction<String>() {

@Override

public void open(Configuration parameters) throws Exception {

super.open(parameters);

}

boolean isRunning = true;

@Override

public void run(SourceContext<String> ctx) throws Exception {

//随机产生数据为 A-Z附带时间戳

while (isRunning) {

SimpleDateFormat simpleDateFormat = new SimpleDateFormat("yyyy/MM/dd HH:mm:ss");

Thread.sleep(1000);

char i = (char) (65 + new Random().nextInt(25));

long timeMills = (System.currentTimeMillis());

//格式化数据为A_Z的字符 + 时间

String element = i + "_" + simpleDateFormat.format(new Date(timeMills));

//设置时间戳

ctx.collectWithTimestamp(element, timeMills);

//水印时间=时间戳+0S

ctx.emitWatermark(new Watermark(timeMills + 0));

}

}

@Override

public void cancel() {

isRunning = false;

}

});

dataStreamSource.print();

//将数据转化为 Tuple2 之后对数据进行分组,同是创建一个窗口 每5秒滚动一次

dataStreamSource.map(new MapFunction<String, Tuple2<String, Integer>>() {

@Override

public Tuple2<String, Integer> map(String value) throws Exception {

String word = value.split("_")[0];

return Tuple2.of(word, 1);

}

}).keyBy(new KeySelector<Tuple2<String, Integer>, String>() {

@Override

public String getKey(Tuple2<String, Integer> value) throws Exception {

return value.f0;

}

}).window(TumblingEventTimeWindows.of(Time.seconds(5))).sum(1).print();

environment.execute("MyTumblingWindows");

}

}

计算结果如图所示,可以看到只是简单的切分时间并对时间范围进行聚合计算,时间范围我们可以通过计算结果看到也是左闭右开。

值得注意的是Flink在使用时间的这个概念的时候就是基于时间纪元这个概念的。比如首先,我们的时区是东八区,在我们的认知中UTC-0时间应该加8小时的offset,才是我们看到的时间,所以在使用flink的窗口的时候往往比我们当前的时间少8小时。

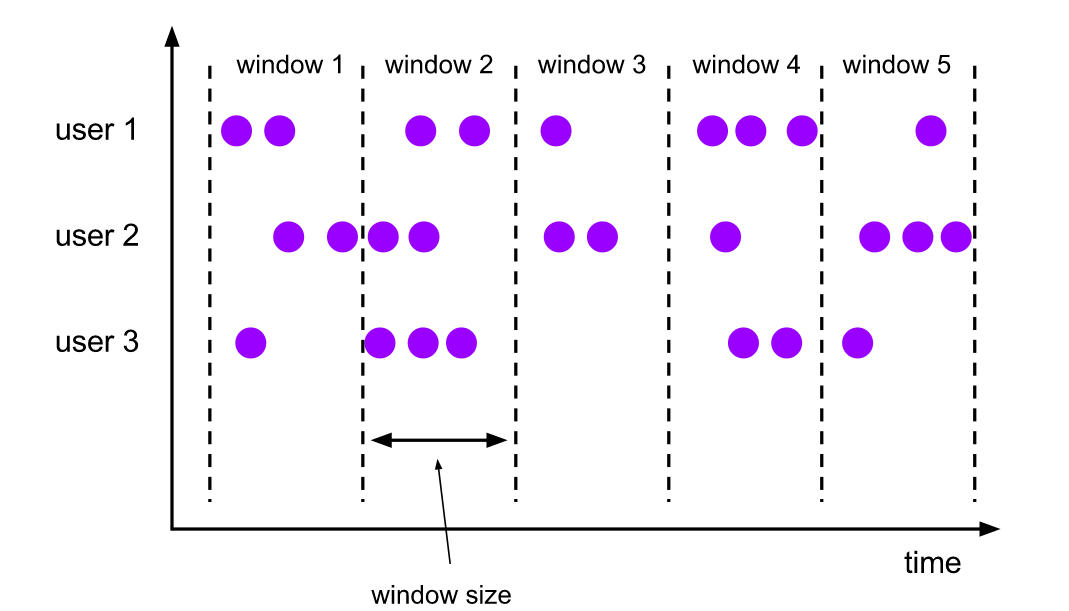

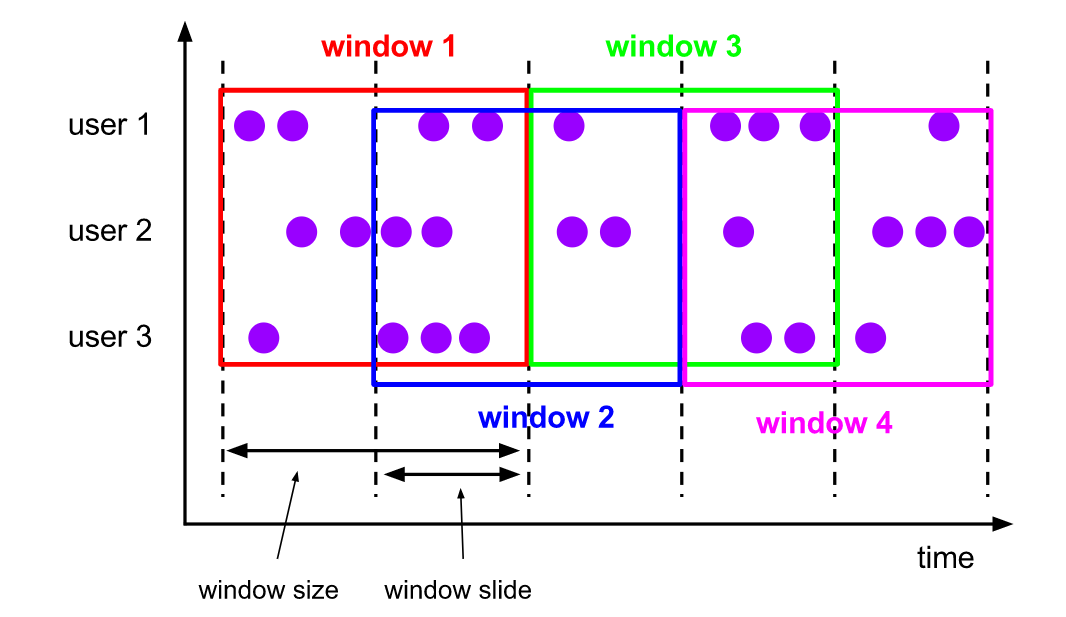

滑动窗口

滑动窗口是在滚动窗口的基础上增加了窗口滑动时间,且允许窗口的数据发生重叠,滑动窗口按照Window slide向前移动,数据重叠部分由Window size和Window slide共同决定。如果Window slide 和Window size相同那就是滚动窗口,如果Window slide size 大于Winow Size就会出现窗口不连续,滑动窗口可以帮助用户根据设定的统计频率计算制定窗口大小的统计指标,比如每隔30s统计最近10min的活跃用户等。

package com.hph.window;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.java.functions.KeySelector;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStreamSource;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.source.RichSourceFunction;

import org.apache.flink.streaming.api.watermark.Watermark;

import org.apache.flink.streaming.api.windowing.assigners.SlidingEventTimeWindows;

import org.apache.flink.streaming.api.windowing.time.Time;

import java.text.SimpleDateFormat;

import java.util.Date;

import java.util.Random;

public class MySlidingWindow {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment environment = StreamExecutionEnvironment.getExecutionEnvironment();

environment.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

DataStreamSource<String> dataStreamSource = environment.addSource(new RichSourceFunction<String>() {

@Override

public void open(Configuration parameters) throws Exception {

super.open(parameters);

}

boolean isRunning = true;

@Override

public void run(SourceContext<String> ctx) throws Exception {

//随机产生数据为 A-Z附带时间戳

while (isRunning) {

SimpleDateFormat simpleDateFormat = new SimpleDateFormat("yyyy/MM/dd HH:mm:ss");

Thread.sleep(1000);

char i = (char) (65 + new Random().nextInt(25));

long timeMills = (System.currentTimeMillis());

//格式化数据为A_Z的字符 + 时间

String element = i + "_" + simpleDateFormat.format(new Date(timeMills));

//设置时间戳

ctx.collectWithTimestamp(element, timeMills);

//水印时间=时间戳+0S

ctx.emitWatermark(new Watermark(timeMills + 0));

}

}

@Override

public void cancel() {

isRunning = false;

}

});

dataStreamSource.print();

//将数据转化为 Tuple2 之后对数据进行分组,同是创建一个窗口 每5秒滑动一次

dataStreamSource.map(new MapFunction<String, Tuple2<String, Integer>>() {

@Override

public Tuple2<String, Integer> map(String value) throws Exception {

String word = value.split("_")[0];

return Tuple2.of(word, 1);

}

}).keyBy(new KeySelector<Tuple2<String, Integer>, String>() {

@Override

public String getKey(Tuple2<String, Integer> value) throws Exception {

return value.f0;

}

//设定步长和滑动时间

}).window(SlidingEventTimeWindows.of(Time.seconds(4), Time.seconds(2))).sum(1).print();

environment.execute("MySlidingWindow");

}

}

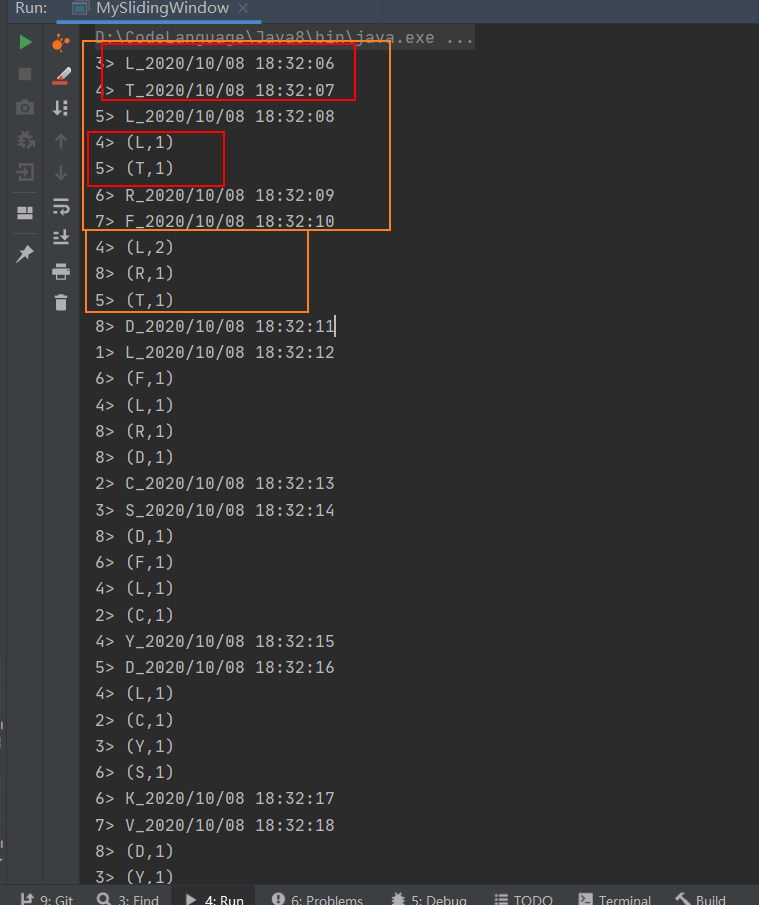

上面简单实现了一个滑动窗口为4s,滑动步长为2s的滑动窗口。

从计算结果我们可以看出来,当数据时出现2s后触发了计算,不过在当时间过了4s一个窗口时触发了计算,在此之后窗口的范围为[9-12) s,时间包含了之前的数据。

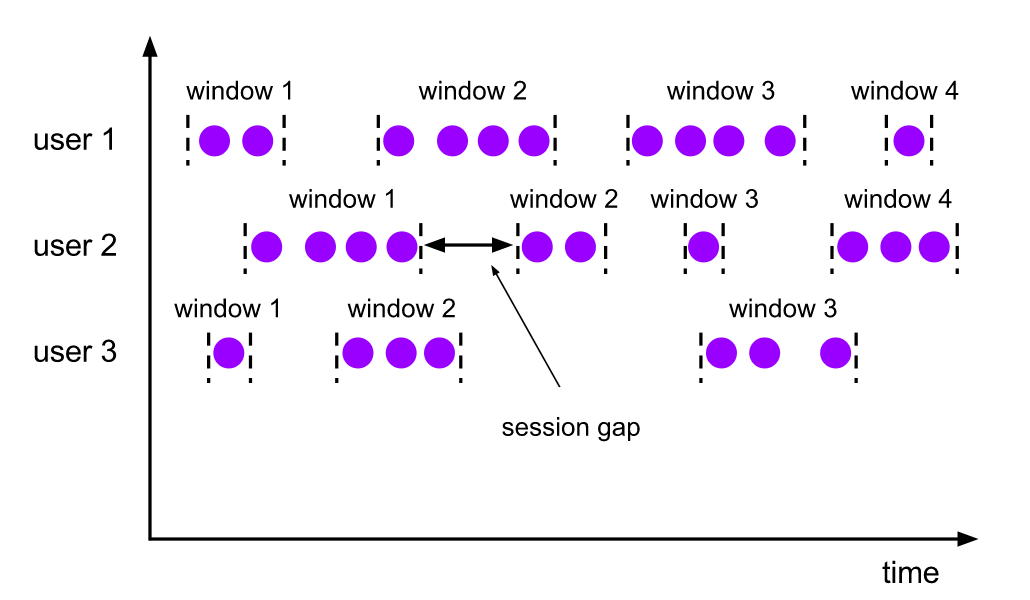

会话窗口

会话窗口主要是将某段时间内活跃度较高的数据聚合承一个窗口进行计算,窗口触发条件为Session Gap及Session时间间隔,如果在规定时间内没有数据活跃那么就认为窗口结束,然后触发计算。Session Windows窗口比较适合于非连续型数据处理或者周期性产生数据的场景,可以根据用户在线上的某一段时间内的活跃度对用户行为进行统计分析。

package com.hph.window;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.java.functions.KeySelector;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStreamSource;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.source.RichSourceFunction;

import org.apache.flink.streaming.api.watermark.Watermark;

import org.apache.flink.streaming.api.windowing.assigners.ProcessingTimeSessionWindows;

import org.apache.flink.streaming.api.windowing.assigners.TumblingEventTimeWindows;

import org.apache.flink.streaming.api.windowing.time.Time;

import java.text.SimpleDateFormat;

import java.util.Date;

import java.util.Random;

public class MySessionWindows {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment environment = StreamExecutionEnvironment.getExecutionEnvironment();

environment.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

DataStreamSource<String> dataStreamSource = environment.addSource(new RichSourceFunction<String>() {

@Override

public void open(Configuration parameters) throws Exception {

super.open(parameters);

}

boolean isRunning = true;

@Override

public void run(SourceContext<String> ctx) throws Exception {

//随机产生数据为 A-Z附带时间戳

while (isRunning) {

SimpleDateFormat simpleDateFormat = new SimpleDateFormat("yyyy/MM/dd HH:mm:ss");

Thread.sleep(1000);

char i = (char) (65 + new Random().nextInt(25));

long timeMills = (System.currentTimeMillis());

//格式化数据为A_Z的字符 + 时间

String element = i + "_" + simpleDateFormat.format(new Date(timeMills));

//设置时间戳

ctx.collectWithTimestamp(element, timeMills);

//水印时间=时间戳+0S

ctx.emitWatermark(new Watermark(timeMills + 0));

}

}

@Override

public void cancel() {

isRunning = false;

}

});

dataStreamSource.print();

//将数据转化为 Tuple2 之后对数据进行分组,同是创建一个窗口

dataStreamSource.map(new MapFunction<String, Tuple2<String, Integer>>() {

@Override

public Tuple2<String, Integer> map(String value) throws Exception {

String word = value.split("_")[0];

return Tuple2.of(word, 1);

}

}).keyBy(new KeySelector<Tuple2<String, Integer>, String>() {

@Override

public String getKey(Tuple2<String, Integer> value) throws Exception {

return value.f0;

}

}).window(EventTimeSessionWindows.withGap(Time.seconds(5))).sum(1).print();

environment.execute("MyTumblingWindows");

}

}

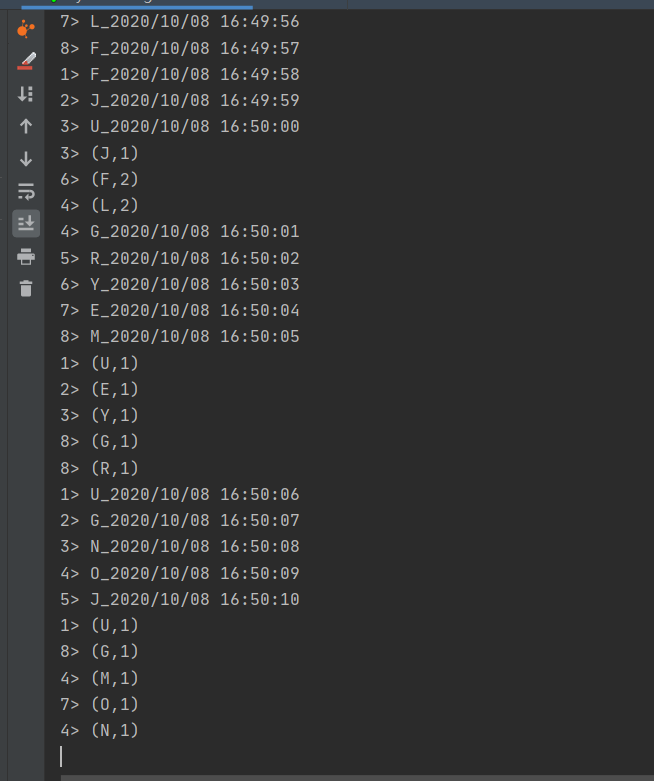

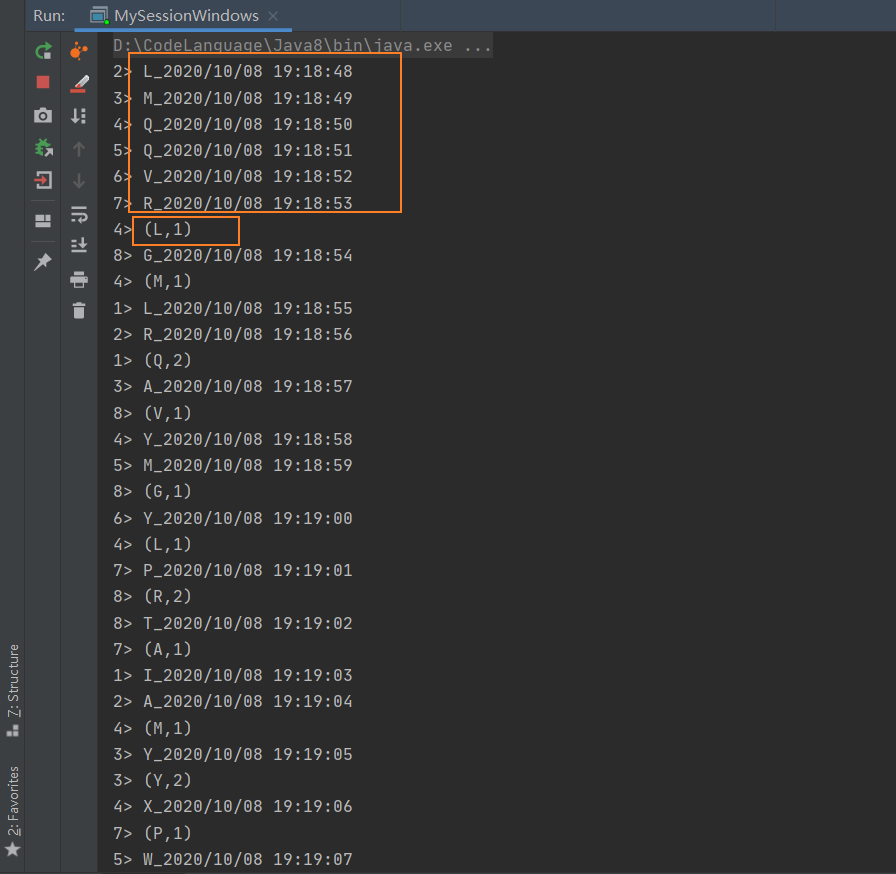

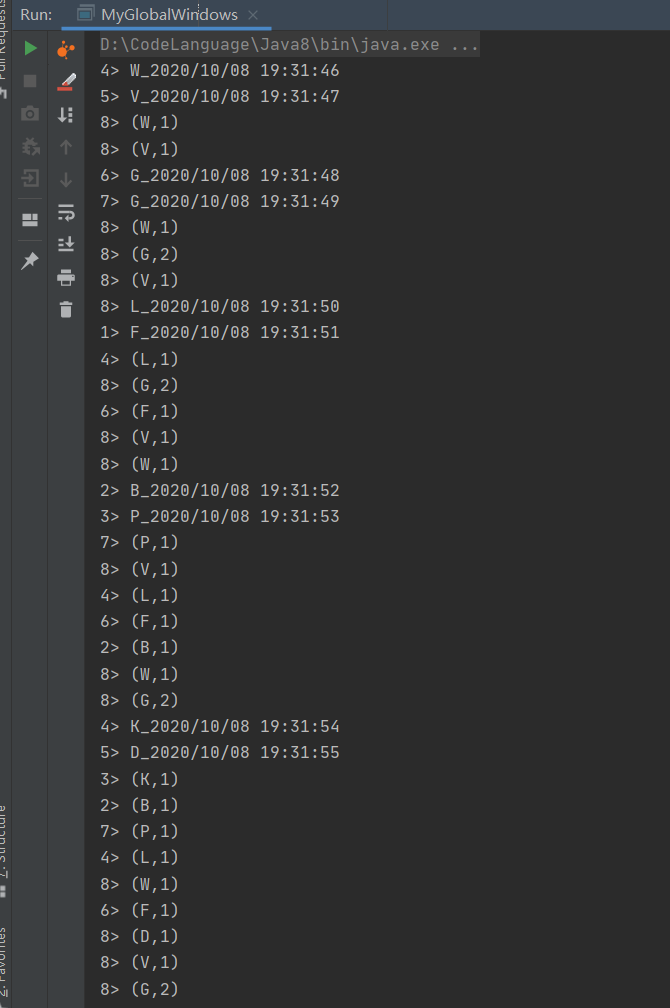

从图中和计算结果中我们分析可得,在5s中之内如果数据没有新增,那么我们可以认为这一段会话是结束的,我们这里规定的不活跃的周期为5s,从而计算结果中,当计算结果出现的时候,我们向前5s即可找到原来的数据。

全局窗口

全局窗口是将所有相同的key的数据分配到单个窗口中去计算,窗口没有起止日期,需要借助与Triger来触发计算,如果对Global Windows没有指定Triger,窗口不会触发计算。因此用户需要明确在整个窗口中统计出的结果是什么,并指定对应的触发器。

package com.hph.window;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.java.functions.KeySelector;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStreamSource;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.source.RichSourceFunction;

import org.apache.flink.streaming.api.watermark.Watermark;

import org.apache.flink.streaming.api.windowing.assigners.EventTimeSessionWindows;

import org.apache.flink.streaming.api.windowing.assigners.GlobalWindows;

import org.apache.flink.streaming.api.windowing.time.Time;

import org.apache.flink.streaming.api.windowing.triggers.ContinuousProcessingTimeTrigger;

import org.apache.flink.streaming.api.windowing.triggers.Trigger;

import java.text.SimpleDateFormat;

import java.util.Date;

import java.util.Random;

public class MyGlobalWindows {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment environment = StreamExecutionEnvironment.getExecutionEnvironment();

environment.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

DataStreamSource<String> dataStreamSource = environment.addSource(new RichSourceFunction<String>() {

@Override

public void open(Configuration parameters) throws Exception {

super.open(parameters);

}

boolean isRunning = true;

@Override

public void run(SourceContext<String> ctx) throws Exception {

//随机产生数据为 A-Z附带时间戳

while (isRunning) {

SimpleDateFormat simpleDateFormat = new SimpleDateFormat("yyyy/MM/dd HH:mm:ss");

Thread.sleep(1000);

char i = (char) (65 + new Random().nextInt(25));

long timeMills = (System.currentTimeMillis());

//格式化数据为A_Z的字符 + 时间

String element = i + "_" + simpleDateFormat.format(new Date(timeMills));

//设置时间戳

ctx.collectWithTimestamp(element, timeMills);

//水印时间=时间戳+0S

ctx.emitWatermark(new Watermark(timeMills + 0));

}

}

@Override

public void cancel() {

isRunning = false;

}

});

dataStreamSource.print();

//将数据转化为 Tuple2 之后对数据进行分组,同是创建一个窗口 每5秒滑动一次

dataStreamSource.map(new MapFunction<String, Tuple2<String, Integer>>() {

@Override

public Tuple2<String, Integer> map(String value) throws Exception {

String word = value.split("_")[0];

return Tuple2.of(word, 1);

}

}).keyBy(new KeySelector<Tuple2<String, Integer>, String>() {

@Override

public String getKey(Tuple2<String, Integer> value) throws Exception {

return value.f0;

}

//指定触发器为2s触发计算

}).window(GlobalWindows.create()).trigger(ContinuousProcessingTimeTrigger.of(Time.seconds(2))).sum(1).print();

environment.execute("MyGlobalWindows");

}

}

其中每隔2s便会触发1次计算从而得到全局的统计结果以上就是关于Flink窗口的简单介绍。